Why Most AI Projects Fail Before Production in 2026

Discover why most AI projects fail before reaching production and learn the real challenges behind AI scalability, workflow reliability, infrastructure, security, monitoring, and operational success in 2026.

Vayqube Team

Author

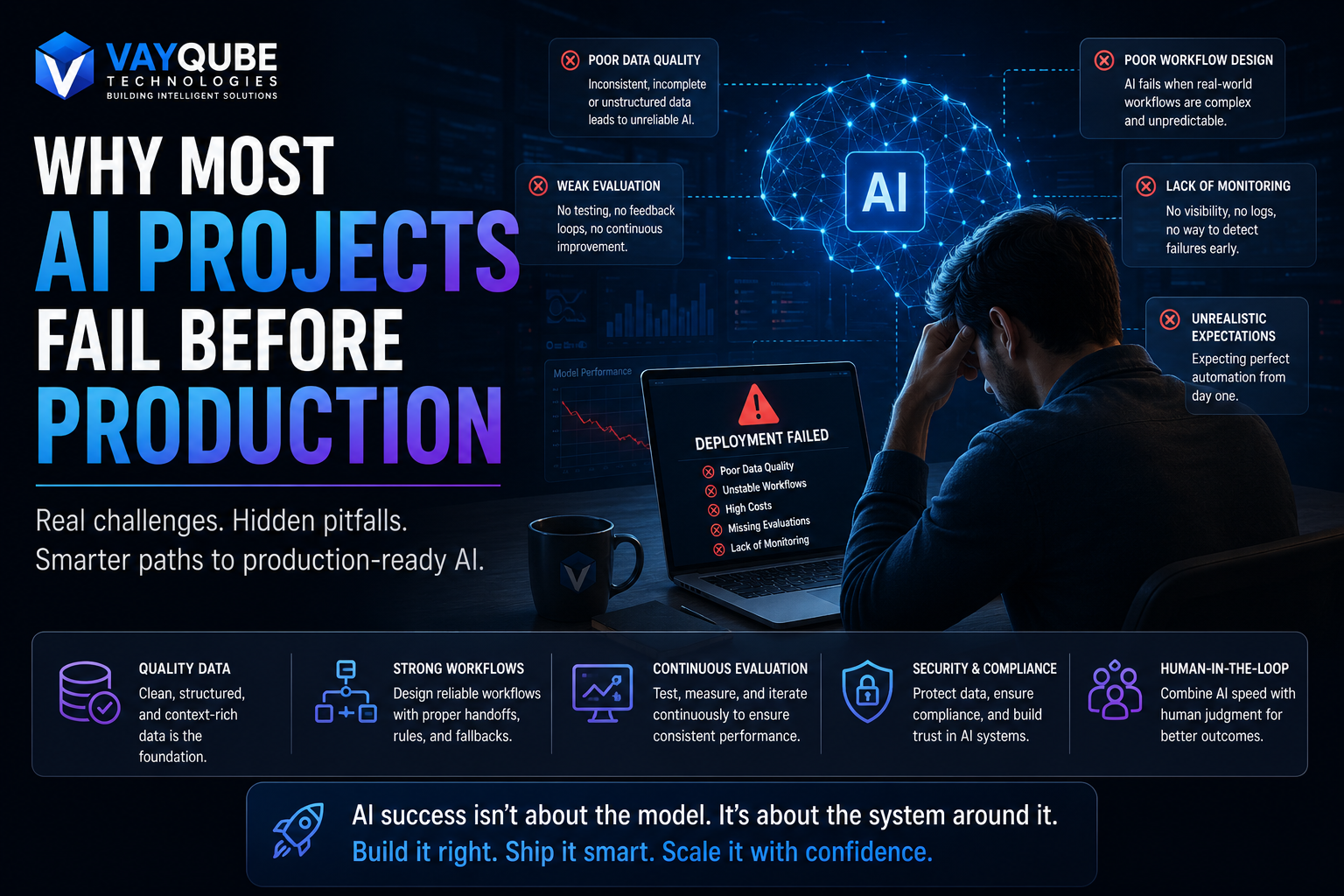

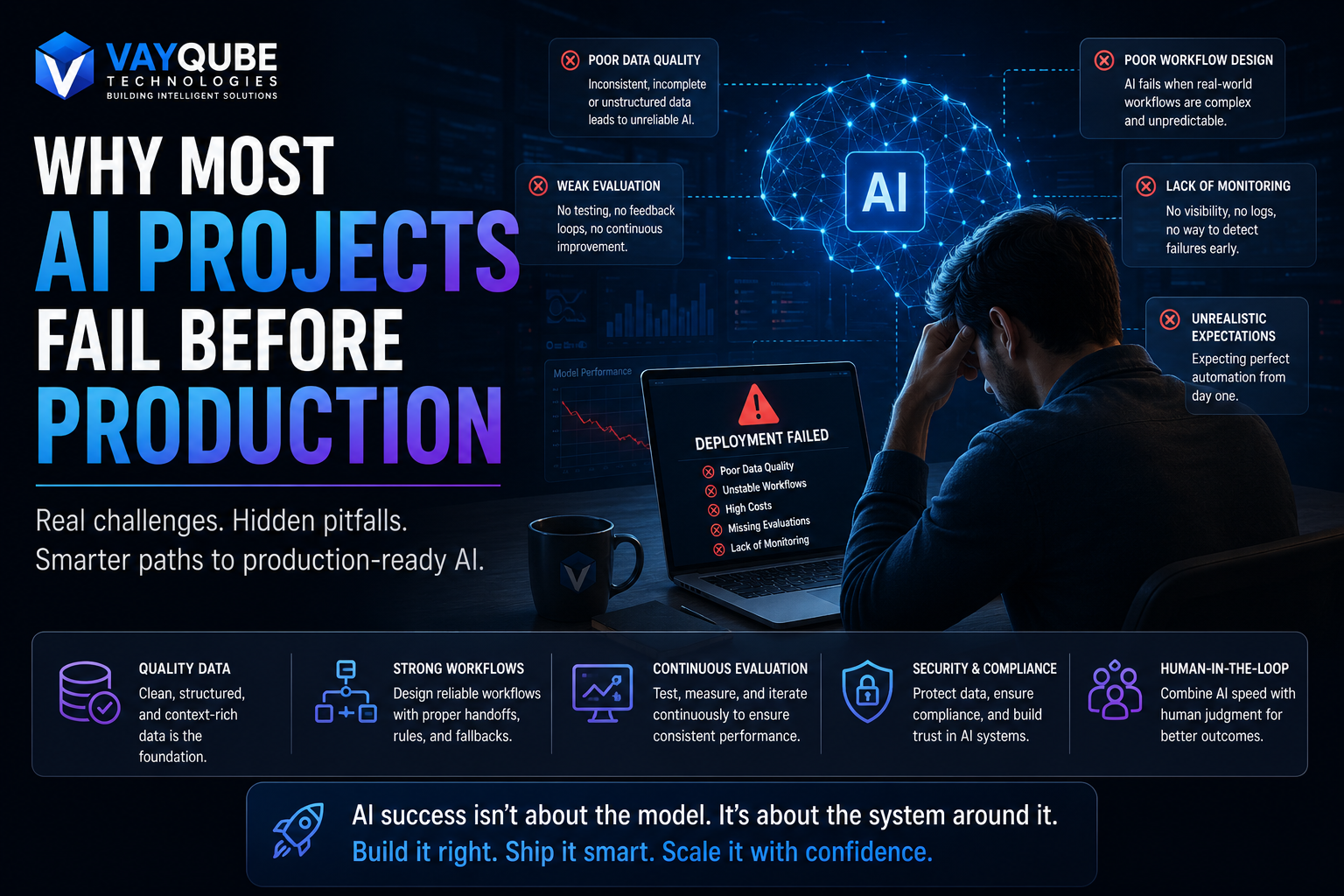

AI adoption is accelerating across startups, SaaS companies, enterprises, and operational teams. Businesses everywhere are experimenting with AI copilots, automation systems, AI agents, customer support assistants, and workflow intelligence platforms. Yet despite the excitement around artificial intelligence, a large percentage of AI projects never reach production.

Many companies build impressive demos that work well in controlled environments, but once they attempt real-world deployment, problems begin to appear. Costs increase unexpectedly, workflows break, responses become unreliable, integrations fail, operational visibility disappears, and teams struggle to maintain consistency as usage scales.

This article is for founders, CTOs, product teams, operations leaders, and enterprise decision-makers evaluating AI adoption. You will learn why so many AI projects fail before production, what technical and operational mistakes businesses commonly make, and how modern companies are building AI systems that are scalable, reliable, and production-ready.

Quick Summary

- Most AI projects fail before production because businesses focus on demos and models instead of operational infrastructure, workflow reliability, data quality, and real-world scalability.

- The biggest business risks include rising infrastructure costs, unreliable outputs, poor integrations, security concerns, and operational instability.

- Before building AI products, businesses should first evaluate workflows, operational requirements, monitoring systems, and long-term scalability instead of focusing only on model selection.

What Teams Should Evaluate First

| Area | What to check | Why it matters |
|---|---|---|
| Business goal | Revenue, efficiency, risk reduction, user experience | Keeps the project tied to measurable outcomes |
| Users | Founders, CTOs, operations, sales, customers | Identifies who will rely on the system day to day |
| Technology | Stack, integrations, data, security | Surfaces implementation tradeoffs early |
| Delivery | Timeline, team, QA, launch, support | Keeps rollout and ongoing support realistic |

Why AI Demos Often Fail in Real Production Environments

Building an AI demo is relatively easy today.

Modern APIs and AI models allow developers to build any of the following within days or weeks:

- AI chatbots

- AI copilots

- AI agents

- Workflow automation systems

- AI search tools

The problem is that production systems are fundamentally different from demos.

Real businesses require:

- Operational reliability

- Security controls

- Scalable infrastructure

- Consistent responses

- Workflow visibility

- Human escalation systems

- Monitoring and analytics

- Compliance management

Most AI projects fail because teams underestimate these operational requirements.

Common Reasons AI Projects Fail

1. Poor Data Quality

AI systems are only as effective as the data they access.

Businesses often have:

- Fragmented databases

- Outdated documentation

- Inconsistent workflows

- Missing operational context

- Poor knowledge organization

This leads to unreliable AI outputs and weak user trust.
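
Outdated documentation is one of the easier data problems to detect automatically. The sketch below flags knowledge-base entries that have not been updated recently. The document structure (`id`, `updated`) and the 180-day cutoff are assumptions made for illustration, not a recommended standard.

```python
from datetime import date, timedelta


def find_stale(docs, today, max_age_days=180):
    """Return ids of documents last updated more than max_age_days ago.

    docs is assumed to be a list of dicts with "id" and "updated" (a date).
    """
    cutoff = today - timedelta(days=max_age_days)
    return [doc["id"] for doc in docs if doc["updated"] < cutoff]


# Hypothetical knowledge-base entries
docs = [
    {"id": "kb-1", "updated": date(2025, 1, 10)},
    {"id": "kb-2", "updated": date(2025, 11, 1)},
]
print(find_stale(docs, today=date(2026, 1, 1)))  # ['kb-1']
```

A report like this does not fix the content, but it gives teams a concrete list to review before the AI system starts answering from it.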

2. Weak Workflow Design

Many AI systems work well in isolated testing environments but fail when connected to real operational workflows.

For example:

- AI cannot handle escalation logic properly

- Human handoffs fail

- Automation loops break

- API dependencies become unstable

- Operational visibility is missing

The AI itself is not always the problem. Workflow orchestration often is.
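
One way to make escalation logic explicit is to pass every AI response through a small routing function before it reaches a customer. This is a minimal sketch: the `AIResponse` fields, the 0.75 confidence threshold, and the list of sensitive topics are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical values; each workflow would need its own thresholds.
CONFIDENCE_THRESHOLD = 0.75
SENSITIVE_TOPICS = {"refund", "legal", "account_deletion"}


@dataclass
class AIResponse:
    text: str
    confidence: float  # assumed score in [0, 1] supplied by the calling system
    topic: str


def route(response: AIResponse) -> str:
    """Return "auto_reply" or "human_review" for a single AI response."""
    if response.topic in SENSITIVE_TOPICS:
        return "human_review"  # sensitive topics always go to a person
    if response.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"  # low confidence: escalate rather than guess
    return "auto_reply"


print(route(AIResponse("Your plan renews on the 1st.", 0.92, "billing")))  # auto_reply
print(route(AIResponse("You can get a refund.", 0.95, "refund")))  # human_review
```

Keeping the rule in one place makes the handoff testable, which is harder when escalation behavior is buried inside prompts.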

3. Over-Focus on Models Instead of Infrastructure

Many businesses spend too much time comparing:

- OpenAI vs Claude

- RAG vs Fine-tuning

- Model benchmarks

while ignoring:

- Monitoring

- Security

- Retrieval quality

- Workflow reliability

- Logging

- Scalability

- Governance

Production AI is primarily an infrastructure challenge, not just a model-selection challenge.

Practical Steps

- Start with one high-impact operational workflow instead of trying to automate everything.

- Organize business knowledge, APIs, and operational systems before adding AI.

- Build strong monitoring, logging, and escalation systems early.

- Test workflows using real operational scenarios instead of controlled demos.

- Add human oversight layers for sensitive or complex operations.
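
As a rough illustration of building logging in early, the sketch below wraps any workflow step so that each call emits one structured log record with its status and latency. The logger name, the record fields, and the `logged_call` helper are assumptions for this example, not part of a specific framework.

```python
import json
import logging
import time
import uuid

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("ai_workflow")  # hypothetical logger name


def logged_call(step_name, fn, *args, **kwargs):
    """Run fn and emit one JSON log record with status and latency."""
    record = {"request_id": str(uuid.uuid4()), "step": step_name}
    start = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
        record["status"] = "ok"
        return result
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise  # the caller still sees the original failure
    finally:
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
        logger.info(json.dumps(record))


# Usage with a stand-in for a model or API call
answer = logged_call("draft_reply", lambda q: q.upper(), "where is my invoice?")
```

Because every step is logged in the same shape, failures and slow calls can be counted per step rather than discovered through customer complaints.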

Why Operational Infrastructure Matters More Than AI Features

Most successful AI systems in production are not simply “smart.”

They are operationally reliable.

Businesses often underestimate how much infrastructure is required around AI systems to maintain production quality.

AI Systems Need Continuous Evaluation

AI behavior changes over time because:

- Business data evolves

- User behavior changes

- APIs change

- Knowledge bases expand

- Workflows become more complex

Without continuous evaluation and monitoring, AI quality degrades quickly.

Modern AI systems require:

- Feedback loops

- Prompt optimization

- Retrieval evaluation

- Operational analytics

- Human review systems

- Error tracking

- Usage monitoring
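
Retrieval evaluation can start very simply. The sketch below computes a hit rate: the share of test queries for which the expected document appears in the top `k` retrieved results. The query set, document ids, and the choice of `k=3` are hypothetical, and a real evaluation would likely need more queries and more than one metric.

```python
def hit_rate_at_k(results, expected, k=3):
    """Fraction of queries whose expected document is in the top k results.

    results:  dict mapping query -> ranked list of document ids
    expected: dict mapping query -> the document id that should be retrieved
    """
    if not expected:
        return 0.0
    hits = sum(
        1
        for query, doc_id in expected.items()
        if doc_id in results.get(query, [])[:k]
    )
    return hits / len(expected)


# Hypothetical retrieval output for two test queries
results = {
    "reset password": ["kb-12", "kb-7", "kb-3"],
    "cancel plan": ["kb-9", "kb-2", "kb-5", "kb-4"],
}
expected = {"reset password": "kb-7", "cancel plan": "kb-4"}

print(hit_rate_at_k(results, expected, k=3))  # 0.5
```

Running a check like this on a schedule gives an early signal when knowledge base changes start hurting retrieval quality.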

Security and Compliance Challenges

As AI systems gain access to operational data, businesses must address:

- Access control

- Sensitive customer data

- Compliance requirements

- Authentication systems

- Audit logs

- API security

- Data privacy

This becomes especially important in:

- FinTech

- Healthcare

- Enterprise SaaS

- Internal operations

- Customer support systems
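
To make access control and audit logging more concrete, here is a minimal sketch that filters the customer fields an AI workflow may see based on role, and records every request. The roles, field names, and in-memory `audit_log` list are assumptions for illustration; a real system would typically rely on durable, append-only storage and an existing identity provider.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the customer fields they may read
ROLE_PERMISSIONS = {
    "support_agent": {"name", "plan", "open_tickets"},
    "billing_admin": {"name", "plan", "card_last4"},
}

audit_log = []  # in-memory stand-in for durable audit storage


def fetch_context(role, customer, requested_fields):
    """Return only the fields the role may read and log the request."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    granted = sorted(f for f in requested_fields if f in allowed)
    denied = sorted(f for f in requested_fields if f not in allowed)
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "granted": granted,
        "denied": denied,
    })
    return {f: customer[f] for f in granted if f in customer}


customer = {"name": "Ada", "plan": "pro", "card_last4": "4242"}
print(fetch_context("support_agent", customer, ["name", "card_last4"]))
# {'name': 'Ada'}
```

Filtering before the data reaches the model means a prompt cannot leak a field the workflow was never given.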

AI Projects Also Fail Due to Unrealistic Expectations

Some businesses expect AI to fully automate operations immediately.

In reality, the most successful systems usually combine:

- AI automation

- Human review

- Operational controls

- Structured workflows

- Incremental rollout strategies

AI adoption works best when businesses improve workflows gradually instead of attempting full automation overnight.
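
An incremental rollout can be sketched as a deterministic percentage gate: each user is hashed into one of 100 buckets, and the AI workflow is enabled only for buckets below the current rollout percentage. The function name and the user id format are assumptions for this example.

```python
import hashlib


def in_rollout(user_id: str, percent: int) -> bool:
    """Deterministically place a user in the first `percent` of 100 buckets."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


# The same user always gets the same answer for a given percentage,
# and users enabled at 10% stay enabled when the rollout grows to 50%.
for user in ["user-1", "user-2", "user-3"]:
    print(user, in_rollout(user, 10), in_rollout(user, 50))
```

Hashing rather than random sampling keeps each user's experience stable between sessions, which makes feedback from the early cohort easier to interpret.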

The Future Is AI Infrastructure, Not Just AI Features

The companies succeeding with AI in 2026 are not simply adding AI chat interfaces.

They are building:

- AI infrastructure

- Workflow orchestration systems

- Operational intelligence layers

- Retrieval systems

- Automation pipelines

- Human-AI collaboration models

This is what separates scalable AI businesses from short-term demos.

Practical Example

Imagine a SaaS company building an AI customer operations platform.

Initially, the company launched a chatbot demo that answered customer questions using basic prompts. Early feedback looked promising.

However, after deployment:

- AI responses became inconsistent

- Customer context retrieval failed

- Escalations broke

- API limits caused delays

- Monitoring was missing

- Support teams lost operational visibility

The company later redesigned the architecture by:

- Adding structured retrieval systems

- Improving workflow orchestration

- Integrating operational monitoring

- Building escalation layers

- Adding human review workflows

- Improving security controls

Only after strengthening the operational infrastructure did the AI system become production-ready.
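
The API limit problem in this example is commonly handled with retries and exponential backoff. The sketch below shows the general idea. `RateLimitError` is a hypothetical exception standing in for whatever a given API client raises, and the attempt count and delays are illustrative values.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error raised when an API reports a rate limit."""


def call_with_backoff(fn, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    """Call fn, retrying on RateLimitError with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the error to the caller
            # Double the wait each attempt; jitter avoids synchronized retries
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)


# Usage with a stand-in call that is rate limited twice, then succeeds
state = {"calls": 0}


def flaky_request():
    state["calls"] += 1
    if state["calls"] < 3:
        raise RateLimitError()
    return "ok"


print(call_with_backoff(flaky_request, sleep=lambda seconds: None))  # ok
```

The `sleep` parameter is injectable only so the example runs instantly; in normal use the default `time.sleep` applies the real delays.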

A similar operational architecture can be seen in systems like the Admin Dashboard, where visibility, workflow control, centralized operations, and scalability are critical for production-grade systems.

FAQ

Why do many AI projects fail after the demo stage?

Most AI demos operate in controlled environments with limited complexity. Real production environments require scalability, workflow reliability, integrations, monitoring, security, and operational governance, which many businesses underestimate during early development.

Is choosing the best AI model the most important factor?

No. While model quality matters, production AI success depends more on operational infrastructure, workflow orchestration, data quality, monitoring systems, and scalability architecture than model benchmarks alone.

How can startups reduce AI project failure risk?

Startups should begin with focused workflows, implement AI gradually, prioritize operational visibility, maintain human oversight, and build strong infrastructure around retrieval, monitoring, integrations, and security before scaling automation aggressively.

Next Step

The future of AI belongs to businesses that build reliable operational systems instead of isolated AI demos. Companies that focus on workflow scalability, infrastructure quality, monitoring, and human-AI collaboration will gain a major competitive advantage as AI adoption continues to grow.

If your business is evaluating AI infrastructure, automation systems, AI agents, or scalable production-ready AI architecture, the next step is to talk to a Vayqube solution architect.